FPGA Neural Networks

Article FPGA Neural Networks The inference of neural networks on FPGA devices Introduction The ever-increasing connectivity in the world is generating ever-increasing levels of data.

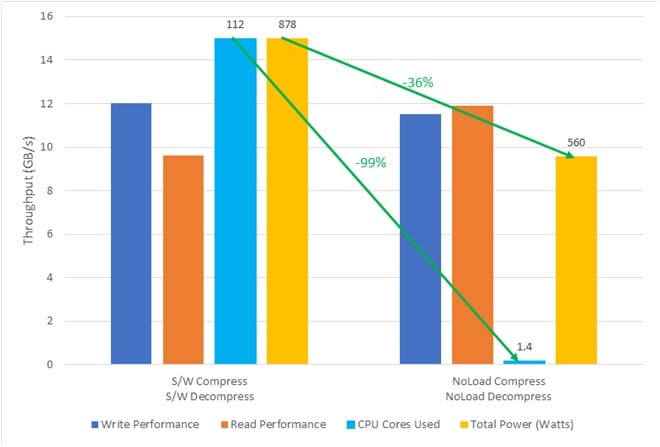

Write to storage 40× faster while keeping the same compression ratio as software

Hardware acceleration cuts CPU usage by one-fifth

Plug in a Card or Module and Your Application Instantly Uses Hardware-Compressed Storage

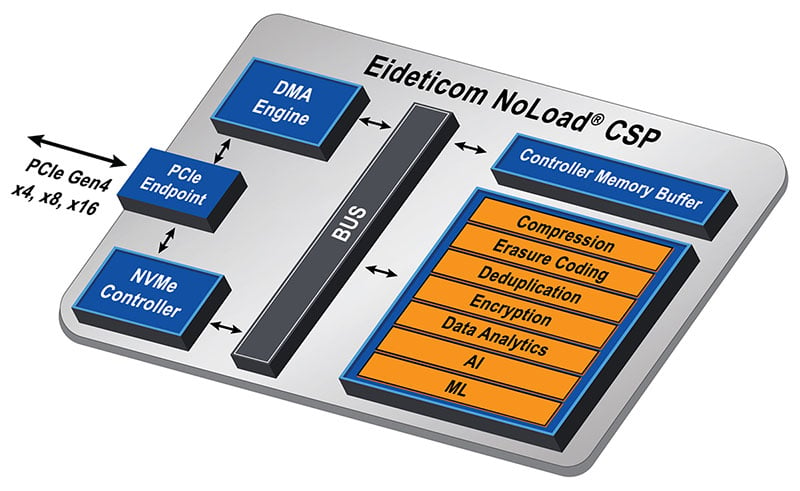

Created by Eideticom and running on BittWare hardware, NoLoad computational storage processors (CSPs) combine IP and hardware to accelerate storage. NoLoad is designed for several core features:

You can think of NoLoad as a framework that orchestrates a number of acceleration features like compression. Some use cases will utilize multiple acceleration features together. Because it’s software-defined hardware, building optimized systems doesn’t require an FPGA engineering team.

A NoLoad CSP is easy to add to an existing storage system because it comes in a range of form factors, including low-profile PCIe add-in cards and U.2 modules. One or two of these then accelerate arrays of flash memory drives. All data movement is over native NVMe with high-performance peer-to-peer transfers.

The Transparent Compression IP is a CSS component of the NoLoad CSP and runs on a range of modules and add-in cards. It provides compression services for the host application. When the hardware is deployed with the NoLoad filesystem (NoLoad FS), the user's application does not need to be modified to take advantage of the hardware compression offload.

The benchmark compares NoLoad Transparent Compression with software (CPU-based) compression and with no compression.

The dataset was Big Data (TeraGen), the server used two IA-220-U2 acceleration modules, and the compression algorithm was LZ4.
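For readers who want a feel for the software (CPU-based) side of such a comparison, the following is a minimal sketch of how compression ratio and throughput might be measured. It is not the Eideticom benchmark: it uses Python's standard-library `zlib` purely as a stand-in because LZ4 is not part of the standard library, and the function name `measure_software_compression` and the synthetic sample data are assumptions made for illustration.

```python
import time
import zlib


def measure_software_compression(data: bytes, level: int = 1) -> dict:
    """Compress `data` on the CPU and report size, ratio, and throughput.

    zlib is a stand-in here; the benchmark in the text used LZ4.
    """
    start = time.perf_counter()
    compressed = zlib.compress(data, level)
    elapsed = time.perf_counter() - start

    # Round-trip check: the compressed stream must restore the input exactly.
    if zlib.decompress(compressed) != data:
        raise RuntimeError("round-trip mismatch")

    return {
        "original_bytes": len(data),
        "compressed_bytes": len(compressed),
        # Ratio > 1 means the data shrank.
        "ratio": len(data) / len(compressed),
        # Input megabytes processed per second of CPU wall time.
        "throughput_mb_s": (len(data) / 1e6 / elapsed) if elapsed > 0 else float("inf"),
    }


if __name__ == "__main__":
    # Synthetic, highly repetitive sample; real datasets will behave differently.
    sample = b"0123456789 sample record payload\n" * 100_000
    print(measure_software_compression(sample))
```

Running the same measurement with compression disabled, and again against a hardware-offloaded path, would give the three data points the comparison describes; only the software case is sketched here.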

Benchmark results for Eideticom NoLoad solution on 4th Gen Intel Xeon Scalable processors and Altera Agilex 7 FPGA accelerator cards. Data sourced from Eideticom.

99% reduction in CPU cores used

36% reduction in total power


How does NoLoad Transparent Compression reduce storage costs at a system level? We compare a system of 192TB SSDs with software compression versus adding two NoLoad Transparent Compression modules.

Software Compression (4× CPUs required to handle load)

With 2× NoLoad Modules
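The system-level comparison above comes down to simple arithmetic: both configurations store the same compressed data, so the difference lies in what it costs to provide the compression. The sketch below shows one way to frame that calculation. Every number in the usage section is a placeholder chosen for illustration (the compression ratio and all prices are hypothetical, and 192 TB is read here as total raw capacity, which is an assumption); the function names are likewise invented for this example.

```python
def effective_capacity_tb(raw_tb: float, compression_ratio: float) -> float:
    """Logical capacity available after compression."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return raw_tb * compression_ratio


def cost_per_effective_tb(
    storage_cost: float,
    compression_hw_cost: float,
    raw_tb: float,
    compression_ratio: float,
) -> float:
    """Total hardware cost divided by logical (post-compression) capacity."""
    total_cost = storage_cost + compression_hw_cost
    return total_cost / effective_capacity_tb(raw_tb, compression_ratio)


if __name__ == "__main__":
    RAW_TB = 192.0        # from the text, read as total raw SSD capacity (assumption)
    RATIO = 2.0           # hypothetical compression ratio, identical in both cases
    SSD_COST = 1000.0     # hypothetical cost units for the SSDs
    CPU_COST = 400.0      # hypothetical cost of the CPUs needed for software compression
    MODULE_COST = 200.0   # hypothetical cost of two accelerator modules

    software = cost_per_effective_tb(SSD_COST, CPU_COST, RAW_TB, RATIO)
    offload = cost_per_effective_tb(SSD_COST, MODULE_COST, RAW_TB, RATIO)
    print(f"software: {software:.2f} per TB, offload: {offload:.2f} per TB")
```

Substituting real prices, power figures, and the measured compression ratio would turn this into an actual comparison; the placeholder values say nothing about the real outcome.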

NoLoad runs on a range of FPGA-based accelerators from BittWare:

These part numbers order the configuration with NoLoad® pre-installed.

| Part Number | Description |
|---|---|
| IA-440i-0017 | Low-profile PCIe card |
| IA-220-U2-0002 | U.2 module |



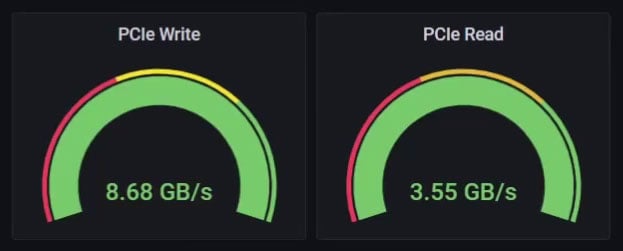

PCIe Gen4 data mover IP from Atomic Rules. Achieve up to 220 Gb/s using BittWare’s PCIe Gen4 cards, saving your development team when you need more performance than standard DMA. Features: DPDK and AXI standards, work with packets or any other data format, operate at any line rate up to 400 GbE.

IA-780i 400G + PCIe Gen5 Single-Width Card Compact 400G Card with the Power of Agilex The Intel Agilex 7 I-Series FPGAs are optimized for applications

BittWare customer OVHcloud built a powerful anti-DDoS solution using FPGA technology, specifically the XUP-P3R card.