FPGA Acceleration of Binary Weighted Neural Network Inference

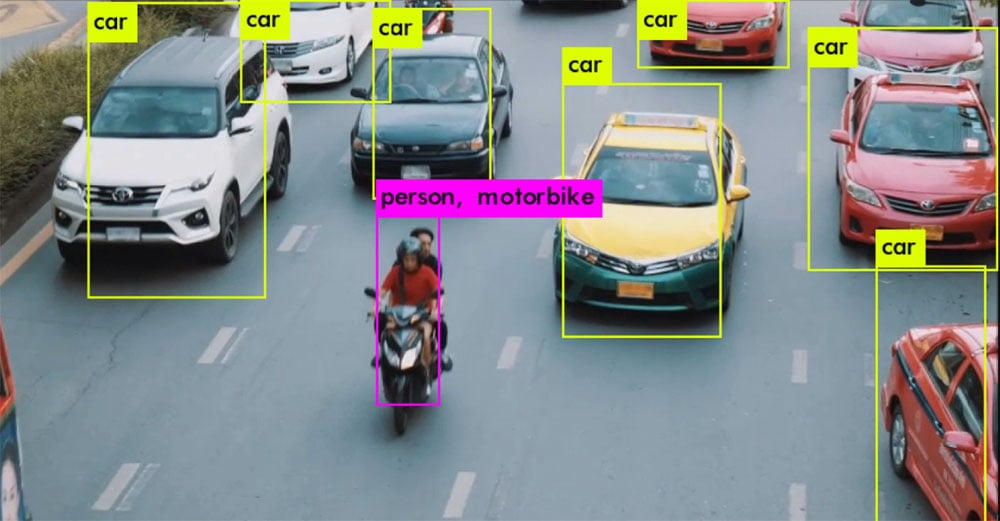

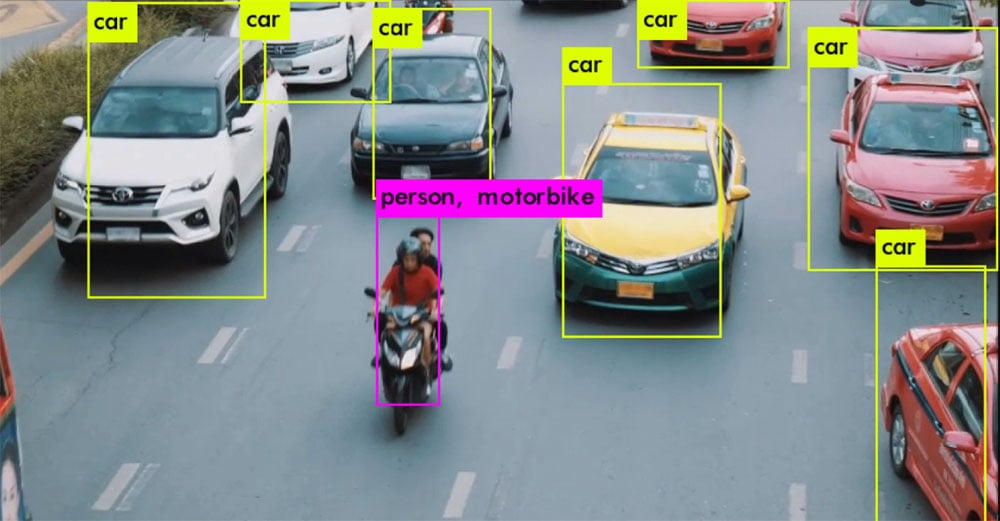

White Paper: FPGA Acceleration of Binary Weighted Neural Network Inference. One of the features of YOLOv3 is multiple-object recognition in a single image. We used …

Craig Petrie, David Clarke, Maurizio Paolini, Richard Chamberlain
